Every year, Data Centre World London offers a useful snapshot of where the industry stands. The 2026 edition, however, felt different.

The conversations across the exhibition floor, panel discussions, and technical sessions all pointed to the same conclusion: data centres are entering a new infrastructure era shaped by artificial intelligence. The infrastructure built for cloud computing is no longer enough for the AI era.

Artificial intelligence is forcing the data-centre industry to redesign itself. Not gradually, but fundamentally.

For the past decade, cloud computing drove most of the industry’s growth. But AI - particularly large-scale model training and inference workloads - is changing the equation. It is not just increasing compute demand; it is forcing operators to rethink power density, cooling strategies, grid capacity, and energy transparency.

The density shift is dramatic. Traditional enterprise racks operated at around 5-10 kW, while modern AI clusters now regularly exceed 100 kW per rack. That is entirely new territory for infrastructure requirements, and it is making operators rethink everything from cooling architectures to energy monitoring systems.

In many ways, the modern data centre is evolving from an IT facility into a high-performance energy platform.

The Expanding Data Centre Economy with Limited Power Infrastructure

Demand for data-centre capacity continues to accelerate globally. The drivers are familiar - cloud services, streaming, fintech, and enterprise digitalisation - but AI has now added a powerful new layer.

With hyperscale cloud providers accelerating AI infrastructure deployment, industry investment in data-centre capacity could reach $3 to $7 trillion globally by the end of the decade (McKinsey).

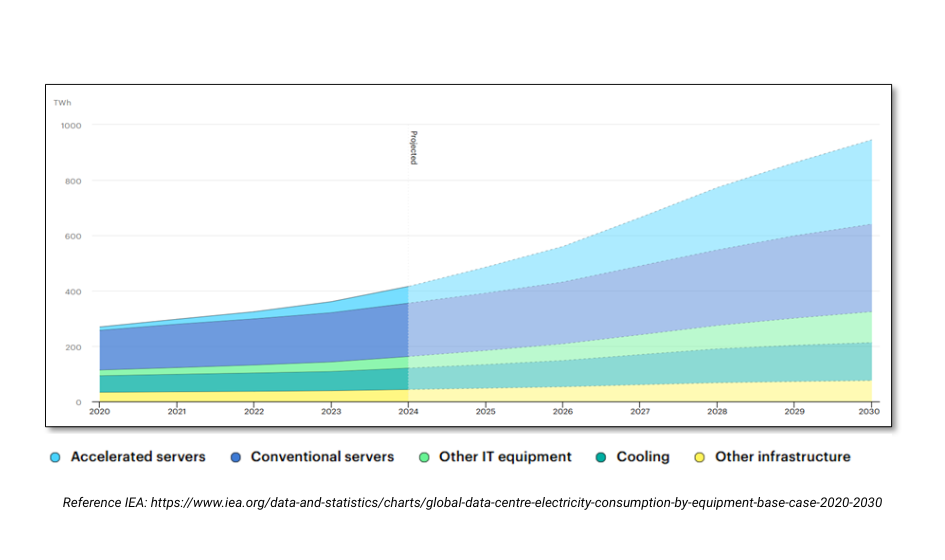

What makes this expansion unique is the sheer energy footprint of next-generation compute. Global estimates suggest data-centre electricity consumption could double by the end of the decade, fuelled by GPU-based workloads and the advent of accelerated computing (IEA).

One of the less visible yet most significant shifts emerging across the industry is the move toward what many developers now call “power-limited data centres.”

In locations such as the UK, Ireland, Frankfurt and Amsterdam, grid connection timelines can now stretch five to eight years, longer than the time required to construct the facility itself.

As a result, developers are increasingly designing campuses around available power capacity rather than physical space. This has triggered a new focus on:

• Higher utilisation of existing electrical infrastructure

• Advanced energy monitoring and optimisation

• Scalable power architectures that maximise available grid capacity…

Data centres are no longer just real estate projects; they are becoming long-term energy infrastructure projects.

This shift is beginning to reshape how facilities are designed and how energy is managed across the entire ecosystem.
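To make the power-limited framing concrete, here is a rough back-of-envelope sketch in Python. It assumes a hypothetical 20 MW grid connection and a PUE of 1.25 (both illustrative figures, not taken from the event), and the function name `racks_supported` is invented for this example. It shows how the same connection powers far fewer racks at AI densities than at traditional ones.

```python
import math


def racks_supported(grid_capacity_mw: float, pue: float, rack_density_kw: float) -> int:
    """Estimate how many racks a fixed grid connection can power.

    IT power is approximated as grid capacity divided by PUE; the
    remainder is assumed to go to cooling and electrical losses.
    """
    if grid_capacity_mw <= 0 or rack_density_kw <= 0:
        raise ValueError("capacity and rack density must be positive")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_power_kw = grid_capacity_mw * 1000 / pue
    return math.floor(it_power_kw / rack_density_kw)


# Illustrative assumptions: 20 MW connection, PUE of 1.25.
for density_kw in (10, 100):
    count = racks_supported(20, 1.25, density_kw)
    print(f"{density_kw} kW per rack: {count} racks")
```

Under these assumptions the site supports 1,600 racks at 10 kW but only 160 at 100 kW, which is why available power, rather than floor space, becomes the planning constraint.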

AI Infrastructure and Operations are Changing the Rules

AI Training

Training large AI models requires enormous clusters of GPUs running continuously for extended periods. These deployments are typically located in large hyperscale campuses where operators can provide:

• Extremely high power capacity

• Advanced cooling systems

• Low-latency networking

Rack densities that once averaged 5–10 kW are now reaching 80–120 kW per rack in AI training environments. This has significant implications for the electrical backbone of the facility.

AI Inference

Inference workloads, where trained models are deployed into real-world applications, follow a different pattern.

These workloads are often distributed across regional or edge facilities, where latency becomes critical. Applications such as AI-powered search, recommendation engines, autonomous systems, and generative AI services rely on infrastructure closer to end users to keep latency low.

The result is a dual infrastructure trend:

| Infrastructure Type | Typical Workload |
|---|---|
| Hyperscale AI campuses | Model training |
| Regional / edge facilities | AI inference |

This combination is driving unprecedented investment across both hyperscale and distributed data-centre architectures.

The Hidden Transformation: Electrical Infrastructure

While GPUs and AI models often capture headlines, one of the most important shifts happening inside data centres is the transformation of electrical infrastructure.

A less discussed consequence of AI infrastructure is its impact on electrical engineering within the data centre. As compute density increases, power delivery systems must evolve just as quickly. The focus is shifting from simply providing backup power to managing highly dynamic energy loads, where visibility, monitoring, and real-time optimisation become critical.

Recent infrastructure forecasts suggest AI workloads could represent more than 50% of total data-centre power consumption by 2030, fundamentally reshaping how facilities are designed and powered. Higher rack densities and fluctuating AI workloads require more resilient and flexible power architectures. According to analysis from the International Energy Agency, global electricity consumption from data centres could exceed 1,000 TWh by the end of the decade, driven largely by AI.

[Chart: IEA]

Several trends stood out during discussions at Data Centre World London 2026:

Next Generation UPS Architectures

UPS systems are evolving to support higher densities and dynamic workloads.

Modular designs allow operators to scale capacity alongside infrastructure expansion while maintaining high efficiency. To accommodate these tough and at times conflicting requirements, UPS technology has been going through fundamental changes, such as the move to SiC (silicon carbide), which offers higher efficiency, better thermal management, and higher power density. The latest UPSs, such as the Socomec Modulys range, balance flexibility and reliability while significantly improving overall system efficiency and ROI.

Granular Energy Monitoring

Energy visibility is becoming essential. Operators increasingly rely on detailed power monitoring at circuit and rack level to understand how workloads affect energy consumption across the facility.

This insight allows teams to optimise performance, improve efficiency, and meet regulatory reporting requirements. Modern systems like DIRIS Digiware are among the most versatile and intelligent power monitoring systems available, offering full downstream measurement with on-the-go deployment.
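As a minimal illustration of what circuit-to-rack visibility means in practice, the Python sketch below sums per-circuit readings into per-rack totals and flags racks above a power budget. The data layout, the function names, and the 100 kW budget are all assumptions made for this example; it does not represent the interface of any particular monitoring product.

```python
from collections import defaultdict


def rack_power_kw(readings):
    """Sum circuit-level readings (rack_id, circuit_id, kW) per rack."""
    totals = defaultdict(float)
    for rack_id, _circuit_id, kw in readings:
        totals[rack_id] += kw
    return dict(totals)


def over_budget(totals, budget_kw):
    """Return the sorted IDs of racks drawing more than budget_kw."""
    return sorted(rack for rack, kw in totals.items() if kw > budget_kw)


# Hypothetical readings: two feeds (A and B) per rack.
readings = [
    ("rack-01", "A", 42.0),
    ("rack-01", "B", 41.5),
    ("rack-02", "A", 55.0),
    ("rack-02", "B", 54.0),
]

totals = rack_power_kw(readings)
print(totals)
print(over_budget(totals, budget_kw=100.0))
```

With these sample values, only the second rack exceeds the assumed budget. A facility-wide meter alone would not reveal that.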

The EU Energy Efficiency Directive (EED) has made granular energy monitoring not just beneficial but effectively mandatory for data centres with an IT power demand of at least 500 kW.

Under the directive's reporting requirements, operators must submit detailed annual reports to the European database by 15 May each year, covering key performance indicators including total energy consumption, Power Usage Effectiveness (PUE), renewable energy factor, and waste heat utilisation.

This level of regulatory compliance demands precise, real-time visibility into energy flows across every layer of the facility infrastructure. Without granular monitoring capabilities, data centres cannot accurately calculate the required metrics or demonstrate compliance with the directive's transparency requirements.
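The indicators named above are simple ratios once the underlying energy figures are metered. The Python sketch below uses the commonly cited definitions: PUE as total facility energy over IT energy, renewable energy factor (REF) as renewable energy over total energy, and energy reuse factor (ERF) as reused energy over total energy. ERF is used here as an assumed stand-in for waste heat utilisation. The class, function name, and annual figures are hypothetical and do not reflect the official reporting template.

```python
from dataclasses import dataclass


@dataclass
class AnnualEnergy:
    total_mwh: float      # all energy consumed by the facility
    it_mwh: float         # energy delivered to IT equipment
    renewable_mwh: float  # part of total_mwh from renewable sources
    reused_mwh: float     # waste heat exported for reuse


def reporting_kpis(e: AnnualEnergy) -> dict:
    """Compute PUE, REF and ERF from annual metered energy."""
    if e.it_mwh <= 0:
        raise ValueError("IT energy must be positive")
    if e.total_mwh < e.it_mwh:
        raise ValueError("total energy cannot be less than IT energy")
    return {
        "pue": e.total_mwh / e.it_mwh,
        "ref": e.renewable_mwh / e.total_mwh,
        "erf": e.reused_mwh / e.total_mwh,
    }


# Hypothetical annual figures for a single facility.
year = AnnualEnergy(total_mwh=13000, it_mwh=10000,
                    renewable_mwh=6500, reused_mwh=1300)
kpis = reporting_kpis(year)
print({name: round(value, 2) for name, value in kpis.items()})
```

Each ratio depends on a separately metered quantity, so the indicators are only as accurate as the measurement points behind them.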

One of the most widely discussed topics during Data Centre World London 2026 was the growing tension between data-centre expansion and power availability.

Recent analysis by McKinsey & Company suggests that AI-driven infrastructure could require tens of gigawatts of additional data-centre capacity globally within the next decade. Total demand is projected to be around 220 GW, with at least 50% of that expected to come from AI.

This will require major upgrades to the existing power ecosystem (generation, transmission, and distribution). Across several European markets, grid capacity constraints are beginning to slow development timelines. In some locations, operators face connection delays of five years or more.

To address this challenge, many operators are exploring alternative energy strategies:

• On-site generation

• Battery energy storage systems (BESS)

• Renewable energy agreements

• Waste-heat recovery

These approaches are helping the industry balance rapid growth with the realities of energy infrastructure.

Final Thoughts

The data-centre industry has always been about balancing innovation with reliability. What Data Centre World London 2026 showed is that the balance is shifting again.

Artificial intelligence is accelerating demand for compute at an unprecedented scale, and the infrastructure supporting it must evolve just as quickly. AI may be driving the current wave of demand, but the real transformation is happening beneath the surface.

Power architecture, energy intelligence, and infrastructure resilience are becoming just as critical as compute performance itself.

The next generation of data centres will not simply host the digital economy - they will determine how efficiently it grows.